This topic covers the following white-box testing techniques:

– Statement Coverage

– Branch or Decision Coverage

– Multiple Condition Coverage

– Loop Coverage

– Call Coverage

– Path Coverage

The explanations are kept simple so that any black-box tester can follow them easily.

Why Test Design?

The quality of testing is only as good as its test design.

Using formal and customized test specification techniques to derive test cases from the input documents (the test basis) helps achieve the following:

– Proper coverage and depth when testing each function (or the system)

– Uniform test specifications across all members of the test team

– More manageable test cases

Understanding test design techniques:

– Classic distinction – black-box and white-box techniques

– Black box – Black-box techniques (also called specification-based techniques) are a way to derive and select test conditions or test cases based on an analysis of the test basis documentation, whether functional or non-functional, for a component or system without reference to its internal structure.

– White box – White-box techniques (also called structural or structure-based techniques) are based on an analysis of the internal structure of the component or system.

Structure-based or white-box techniques

– Structure-based testing/white-box testing is based on an identified structure of the software or system, as seen in the following examples:

i. Component level: the structure is that of the code itself, i.e. statements, decisions or branches.

ii. Integration level: the structure may be a call tree (a diagram in which modules call other modules).

iii. System level: the structure may be a menu structure, business process or web page structure.

Statement Coverage:

– Testing to satisfy the criterion that each statement in a program is executed at least once during testing. Coverage is 100 percent when a set of test cases causes every program statement to be executed at least once.

– The chief disadvantage of statement coverage is that it is insensitive to some control structures.

Example:

```c
int select(int a[], int n, int x)    /* 1 */
{                                    /* 2 */
    int i = 0;                       /* 3 */
    while (i < n && a[i] < x)        /* 4 */
    {                                /* 5 */
        if (a[i] < 0)                /* 6 */
            a[i] = -a[i];            /* 7 */
        i++;                         /* 8 */
    }                                /* 9 */
    return 1;                        /* 10 */
}                                    /* 11 */
```

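A single test case, n=1, a[0]=-7, x=9, executes every statement:

Flow: 1 -> 2 -> 3 -> 4 -> 5 -> 6 -> 7 -> 8 -> 9 -> 4 -> 10 -> 11

This test never makes a[i] < 0 false, yet statement coverage is still 100 percent, which illustrates the insensitivity to control structures noted above.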
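One way to check the claim above is to instrument select by hand. The sketch below is a minimal illustration, not a coverage tool: the HIT macro, the hit array and the helper names are hypothetical, and it counts only the executable statements on lines 3, 4, 6, 7, 8 and 10 (the other numbered lines are braces or the function header). In practice a tool such as gcov collects this information automatically.

```c
#include <stdbool.h>
#include <string.h>

/* hit[k] becomes true once statement k of select() has run (hypothetical helper). */
static bool hit[12];
#define HIT(k) (hit[k] = true)

/* select() from the example, with each executable statement marked. */
int select_instrumented(int a[], int n, int x)
{
    HIT(3); int i = 0;
    while (HIT(4), i < n && a[i] < x) {
        HIT(6);
        if (a[i] < 0) {
            HIT(7); a[i] = -a[i];
        }
        HIT(8); i++;
    }
    HIT(10); return 1;
}

/* Percentage of the executable statements (3, 4, 6, 7, 8, 10) that were hit. */
int statement_coverage(void)
{
    static const int stmts[] = {3, 4, 6, 7, 8, 10};
    int covered = 0;
    for (int k = 0; k < 6; k++)
        if (hit[stmts[k]])
            covered++;
    return covered * 100 / 6;
}

/* Clears the record before the next test case. */
void reset_hits(void) { memset(hit, 0, sizeof hit); }
```

With n=1, a[0]=-7, x=9 the sketch reports 100 percent; with n=1, a[0]=9, x=7 the loop body is skipped, only lines 3, 4 and 10 run, and it reports 50 percent.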

Branch or Decision Coverage:

– A test coverage criterion which requires that every possible outcome (branch) of each decision point be executed at least once.

Example:

```c
int select(int a[], int n, int x)    /* 1 */
{                                    /* 2 */
    int i = 0;                       /* 3 */
    while (i < n && a[i] < x)        /* 4 */
    {                                /* 5 */
        if (a[i] < 0)                /* 6 */
            a[i] = -a[i];            /* 7 */
        i++;                         /* 8 */
    }                                /* 9 */
    return 1;                        /* 10 */
}                                    /* 11 */
```

Test Data:

| Branch coverage | i | n | x | a[i] | Branch outcome |
|---|---|---|---|---|---|
| while (i < n && a[i] < x) | 0 | 1 | 9 | -7 | TRUE |
| | 0 | 1 | 7 | 9 | FALSE |

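Flow A: 1 -> 2 -> 3 -> 4 -> 5 -> 6 -> 7 -> 8 -> 9 -> 4 -> 10 -> 11

Flow B: 1 -> 2 -> 3 -> 4 -> 10 -> 11

These two tests take both outcomes of the while decision. Full branch coverage also needs the false outcome of if (a[i] < 0), which a non-negative element provides, for example n=1, a[0]=3, x=9 (1 -> 2 -> 3 -> 4 -> 5 -> 6 -> 8 -> 9 -> 4 -> 10 -> 11).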
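To see which decision outcomes a set of tests actually reaches, the following sketch records the true and false outcome of both decisions in select. The record helper, the outcome table and the function names are hypothetical instrumentation added for illustration.

```c
#include <stdbool.h>
#include <string.h>

/* outcome[d][0] / outcome[d][1]: decision d evaluated false / true.
   d = 0 is the while condition (line 4), d = 1 is the if (line 6). */
static bool outcome[2][2];

/* Notes the outcome of decision d and passes the value through unchanged. */
static bool record(int d, bool value)
{
    outcome[d][value] = true;
    return value;
}

/* select() from the example, with both decisions recorded. */
int select_branches(int a[], int n, int x)
{
    int i = 0;
    while (record(0, i < n && a[i] < x)) {
        if (record(1, a[i] < 0))
            a[i] = -a[i];
        i++;
    }
    return 1;
}

/* Number of the four branch outcomes (2 decisions x true/false) exercised. */
int branches_covered(void)
{
    int count = 0;
    for (int d = 0; d < 2; d++)
        for (int v = 0; v < 2; v++)
            count += outcome[d][v];
    return count;
}

/* Clears the record before a new test suite. */
void reset_outcomes(void) { memset(outcome, 0, sizeof outcome); }
```

Running only the two table rows leaves branches_covered() at 3 of 4; adding a test whose element is non-negative brings it to 4.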

Multiple Condition Coverage:

– A test coverage criterion which requires enough test cases that all possible combinations of condition outcomes in each decision, and all points of entry, are invoked at least once.

– A large number of test cases may be required for full multiple condition coverage: a decision with n conditions has 2^n combinations.

Example:

```c
int select(int a[], int n, int x)    /* 1 */
{                                    /* 2 */
    int i = 0;                       /* 3 */
    while (i < n && a[i] < x)        /* 4 */
    {                                /* 5 */
        if (a[i] < 0)                /* 6 */
            a[i] = -a[i];            /* 7 */
        i++;                         /* 8 */
    }                                /* 9 */
    return 1;                        /* 10 */
}                                    /* 11 */
```

Test Data:

| Multiple condition coverage | i | n | a[i] | x | Outcome |
|---|---|---|---|---|---|
| while (i < n && a[i] < x) | 0 | 4 | -10 | 10 | True |
| | 0 | 4 | 10 | 10 | False |
| | 0 | -4 | -10 | 10 | False |
| | 0 | -4 | 10 | 10 | False |
| if (a[i] < 0) | – | – | -10 | – | True |
| | – | – | 10 | – | False |

Flow A: 1 -> 2 -> 3 -> 4 -> 5 -> 6 -> 7 -> 8 -> 9 -> 4 -> 10 -> 11

Flow B: 1 -> 2 -> 3 -> 4 -> 10 -> 11

Flow C: 1 -> 2 -> 3 -> 4 -> 5 -> 6 -> 8 -> 9 -> 4 -> 10 -> 11
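The sketch below evaluates the while condition of select the way C does and reports each condition separately, which shows how the four table rows actually behave. It relies on C's short-circuit && semantics; the Evaluation type and the function names are hypothetical.

```c
#include <stdbool.h>

/* Outcome of each condition in (i < n && a[i] < x). With &&, the second
   condition is not evaluated when the first is false; that is shown as -1. */
typedef struct {
    int first;    /* i < n:    1 true, 0 false                  */
    int second;   /* a[i] < x: 1 true, 0 false, -1 not evaluated */
    bool result;  /* value of the whole decision                */
} Evaluation;

Evaluation evaluate_while(int i, int n, int a_i, int x)
{
    Evaluation e = { .first = i < n, .second = -1, .result = false };
    if (e.first) {            /* mirrors the short-circuit of && */
        e.second = a_i < x;
        e.result = e.second;
    }
    return e;
}

/* Combinations multiple condition coverage must consider for a decision
   with `conditions` independent conditions: 2^conditions. */
unsigned long combinations(unsigned conditions)
{
    return 1UL << conditions;
}
```

Because && stops as soon as i < n is false, the third and fourth table rows produce identical evaluations (a[i] < x is never evaluated), so in C only three of the four combinations are distinguishable at run time.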

Loop Coverage:

– A test coverage criterion which checks that the loop body is executed zero times, exactly once, and more than once.

Example:

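```c
#include <stdio.h>

int main(void)
{
    int i, n, x;
    int a[10] = {0};   /* elements not entered default to 0 */

    printf("Enter the values of i, n, x and a[i]: ");
    /* Read i before a[i] so that &a[i] refers to a valid element;
       reject inputs that would index outside a[0..9]. */
    if (scanf("%d %d %d", &i, &n, &x) != 3 || i < 0 || i > 9 || n > 9)
        return 1;
    if (scanf("%d", &a[i]) != 1)
        return 1;

    while (i < n && a[i] < x)
    {
        if (a[i] < 0)
            a[i] = -a[i];
        i++;
    }

    printf("%d\n", a[i]);
    return 0;
}
```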

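Test Data:

| Loop coverage | i | n | x | a[i] | Loop body executed |
|---|---|---|---|---|---|
| while (i < n && a[i] < x) | 0 | 4 | 5 | 10 | 0 times |
| | 3 | 4 | 5 | -10 | 1 time |
| | 1 | 4 | 5 | -10 | 3 times |

The last row loops three times only if a[2] and a[3] are also less than x (for example, 0).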

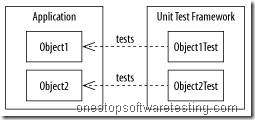
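The three loop cases can be checked directly by counting iterations. This minimal sketch reuses the loop from the example; loop_iterations, LoopCase and loop_case are hypothetical names, and the caller must supply an array with at least n elements (here the elements not listed in the table are assumed to be 0).

```c
/* The three cases loop coverage asks for. */
typedef enum { ZERO_TIMES, EXACTLY_ONCE, MORE_THAN_ONCE } LoopCase;

/* Runs the example loop starting at index `start` and returns how many
   times the loop body executed. `a` must hold at least `n` elements. */
int loop_iterations(int a[], int start, int n, int x)
{
    int count = 0;
    int i = start;
    while (i < n && a[i] < x) {
        if (a[i] < 0)
            a[i] = -a[i];
        i++;
        count++;
    }
    return count;
}

/* Classifies an iteration count into one of the three loop-coverage cases. */
LoopCase loop_case(int iterations)
{
    if (iterations == 0)
        return ZERO_TIMES;
    if (iterations == 1)
        return EXACTLY_ONCE;
    return MORE_THAN_ONCE;
}
```

With i=0, n=4, x=5 and a[0]=10 the count is 0; with i=3 and a[3]=-10 it is 1; with i=1, a[1]=-10 and a[2]=a[3]=0 it is 3, one test per loop-coverage case.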

Call Coverage

– A test coverage criterion which checks that each function is called zero times, exactly once, and more than once.

– Since failures are more likely at function calls, every function call should be executed at least once.

Example:

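```c
#include <stdio.h>

void sample(int x, int y);

int main(void)
{
    int a, b, i;

    printf("Enter the values of a, b, i: ");
    if (scanf("%d %d %d", &a, &b, &i) != 3)
        return 1;

    /* A loop (not a single if) is needed for sample() to be called
       more than once, as the "3 times" test row expects. */
    while (i < 10)
    {
        sample(a, b);
        i = i + 1;
    }
    return 0;
}

void sample(int x, int y)
{
    /* "break" is not allowed outside a loop or switch; it is read here
       as "skip the remaining check", hence the else. */
    if (x > 10)
        x = x + y;
    else if (y > 10)
        y = y + x;
}
```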

Test Data:

| Call coverage | a | b | i | sample called |
|---|---|---|---|---|
| sample(int x, int y) | 2 | 4 | 10 | 0 times |
| | 2 | 4 | 9 | 1 time |
| | 2 | 4 | 7 | 3 times |

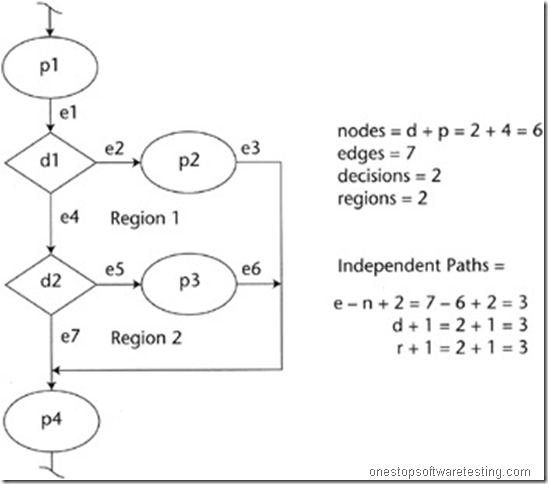
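A simple way to observe call counts is a counter incremented on entry to the function. This sketch assumes the loop form of the driver (while (i < 10)), since a single if can call sample at most once; sample_calls and run_driver are hypothetical names added for illustration.

```c
/* Hypothetical instrumentation: number of calls to sample(). */
static int sample_calls = 0;

void sample(int x, int y)
{
    sample_calls++;           /* count every entry into the function */
    if (x > 10)
        x = x + y;
    else if (y > 10)
        y = y + x;
}

/* The driver logic of main() from the example; returns how many times
   sample() was called for the given inputs. */
int run_driver(int a, int b, int i)
{
    sample_calls = 0;
    while (i < 10) {
        sample(a, b);
        i = i + 1;
    }
    return sample_calls;
}
```

For a=2, b=4 the driver calls sample 0, 1 and 3 times for i = 10, 9 and 7 respectively, matching the table rows.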

Path Coverage

– Testing to satisfy the coverage criterion that each logical path through the program is tested. Because the number of paths can be very large, paths are often grouped into a finite set of classes, and one path from each class is then tested.

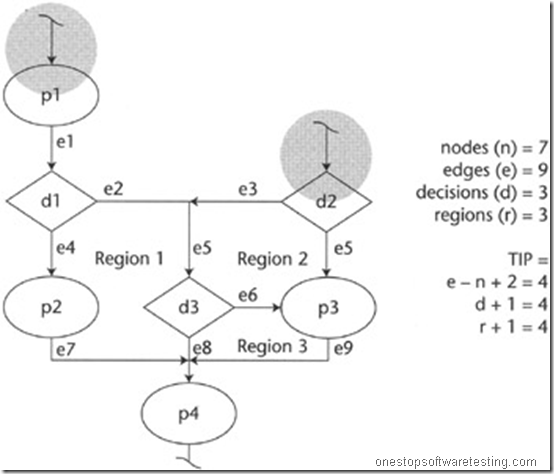

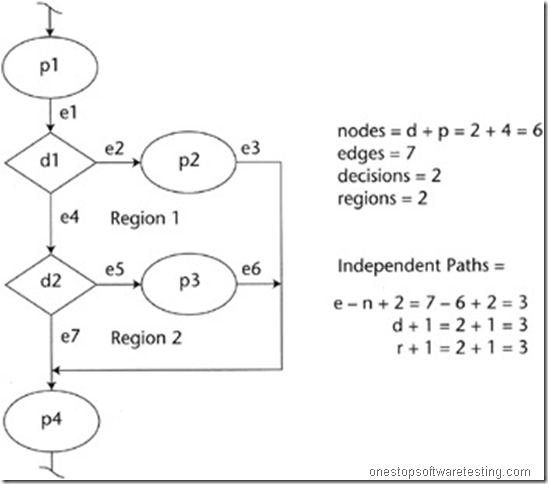

– Helps determine the cyclomatic complexity; the minimum number of test cases needed to cover all linearly independent paths equals the cyclomatic complexity

– Full path coverage requires executing every path, but the number of paths may be infinite if the program contains loops

– Helps check whether a logic flow circuit is valid according to predefined rules
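Cyclomatic complexity is commonly computed with McCabe's formula V(G) = E - N + 2P (edges, nodes, connected components), or, for structured code built from binary decisions, as the number of decisions plus one. The sketch below encodes both; the function names are hypothetical. As an example it assumes the flow graph of the sample program at the end of this topic has nodes 1 to 6 and edges 1->2, 2->3, 2->4, 3->6, 4->5, 4->6, 5->6.

```c
/* McCabe's cyclomatic complexity V(G) = E - N + 2P, where E is the number
   of edges, N the number of nodes and P the number of connected components
   (P = 1 for a single function). */
int cyclomatic_complexity(int edges, int nodes, int components)
{
    return edges - nodes + 2 * components;
}

/* For structured code with only binary decisions, V(G) is also the
   number of decisions plus one. */
int complexity_from_decisions(int decisions)
{
    return decisions + 1;
}
```

For that graph E = 7 and N = 6, so V(G) = 7 - 6 + 2 = 3, which agrees with its two decisions plus one: at least three test cases are needed to cover its independent paths.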

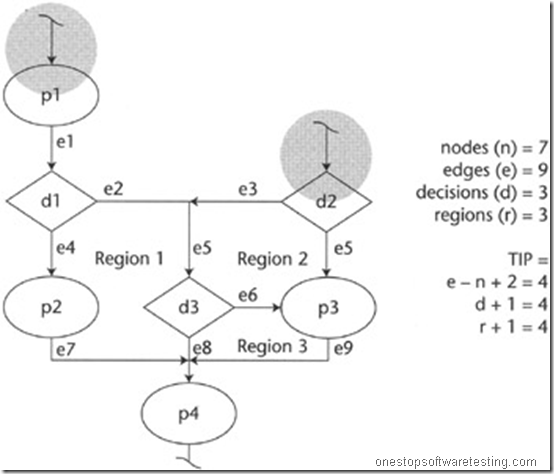

Is this a valid logic flow circuit?

No.

Why not? It has two entrances.

Rule:

A structured system can have only one entry point and one exit point.

This system is not a valid logic circuit because it is not a structured system. Calculating linearly independent paths for it gives five, but that value is erroneous because the single-entry, single-exit rule is violated.
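The single-entry, single-exit rule can be checked mechanically on an edge list. In this minimal sketch an entry is assumed to be a node with no incoming edges and an exit a node with no outgoing edges, a simplification that misses an entry node which is also the target of a loop. The Edge type, the function name and the 64-node limit are assumptions for illustration.

```c
#include <stdbool.h>

/* A directed edge of a flow graph; nodes are numbered 0 .. nodes-1. */
typedef struct { int from, to; } Edge;

/* True when exactly one node has no incoming edges (the entry) and exactly
   one node has no outgoing edges (the exit). Assumes nodes <= 64. */
bool is_single_entry_single_exit(const Edge edges[], int edge_count, int nodes)
{
    int in_degree[64] = {0};
    int out_degree[64] = {0};
    for (int e = 0; e < edge_count; e++) {
        out_degree[edges[e].from]++;
        in_degree[edges[e].to]++;
    }
    int entries = 0, exits = 0;
    for (int v = 0; v < nodes; v++) {
        if (in_degree[v] == 0)
            entries++;
        if (out_degree[v] == 0)
            exits++;
    }
    return entries == 1 && exits == 1;
}
```

A graph with two nodes that have no incoming edges, like the two-entrance circuit above, fails the check.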

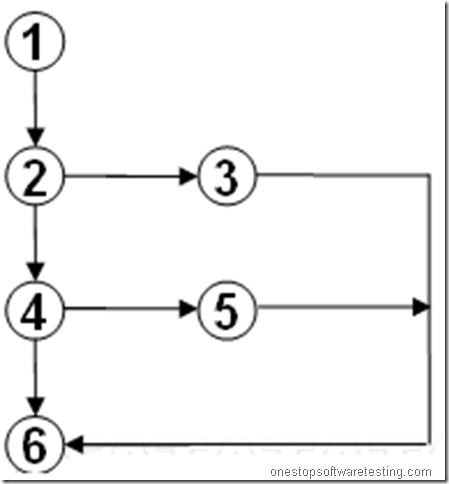

Example:

Linearly Independent Paths

Path 1: p1 -> d1 -> d2 -> p4

Path 2: p1 -> d1 -> p2 -> p4

Path 3: p1 -> d1 -> d2 -> p3 -> p4
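The paths above can be enumerated with a depth-first search from the entry node. Since the diagram is not reproduced here, the edges are assumed from the three listed paths (p1->d1, d1->d2, d1->p2, d2->p3, d2->p4, p2->p4, p3->p4); the node names follow the list and the function name is hypothetical. The search terminates only for acyclic graphs, which is why loops make full path coverage impractical.

```c
/* Nodes of the example graph, named as in the path list. */
enum { P1, D1, D2, P2, P3, P4, NODE_COUNT };

/* Adjacency matrix assumed from the three listed paths. */
static const int adj[NODE_COUNT][NODE_COUNT] = {
    [P1] = { [D1] = 1 },
    [D1] = { [D2] = 1, [P2] = 1 },
    [D2] = { [P3] = 1, [P4] = 1 },
    [P2] = { [P4] = 1 },
    [P3] = { [P4] = 1 },
};

/* Counts the distinct paths from `node` to `target` by depth-first search.
   Only valid for acyclic graphs; a loop would make the recursion endless. */
int count_paths(int node, int target)
{
    if (node == target)
        return 1;
    int total = 0;
    for (int next = 0; next < NODE_COUNT; next++)
        if (adj[node][next])
            total += count_paths(next, target);
    return total;
}
```

count_paths(P1, P4) returns 3, and with 7 edges and 6 nodes V(G) = 7 - 6 + 2 = 3 as well, so here every path is also one of the linearly independent paths.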

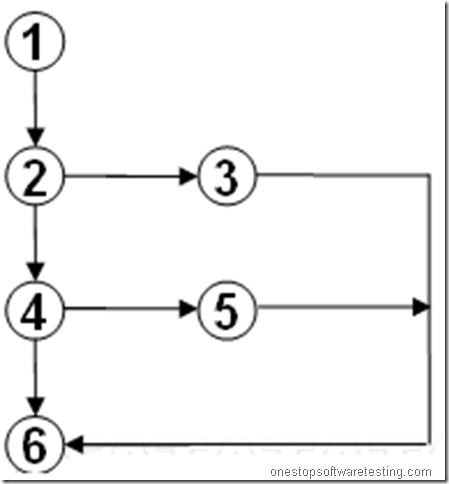

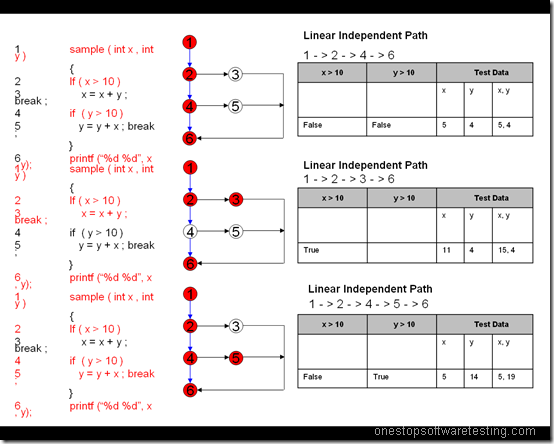

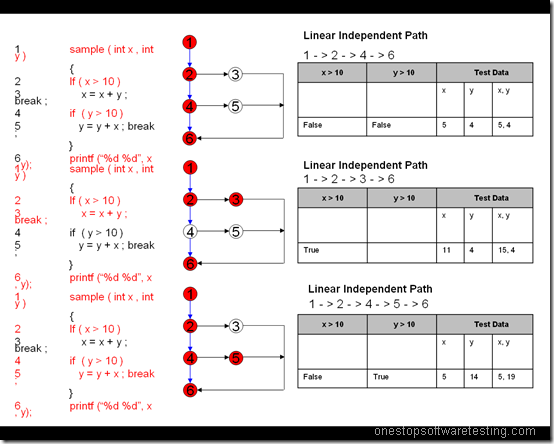

Sample Program:

```c
#include <stdio.h>

void sample(int x, int y)          /* 1 */
{
    if (x > 10)                    /* 2 */
        x = x + y;                 /* 3: the original "break" skips line 4 */
    else if (y > 10)               /* 4 */
        y = y + x;                 /* 5 */
    printf("%d %d\n", x, y);       /* 6 */
}
```
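Three tests are enough to drive every path through the sample program. The sketch records the executed line numbers as the digits of an integer (1-2-3-6 becomes 1236); the STEP macro, path_id and sample_path are hypothetical instrumentation, and the original break statements are assumed to mean "skip the remaining check", that is, an else.

```c
#include <stdio.h>

/* Hypothetical instrumentation: executed line numbers as decimal digits. */
static long path_id;
#define STEP(n) (path_id = path_id * 10 + (n))

/* The sample program, returning the path it took. */
long sample_path(int x, int y)
{
    path_id = 0;
    STEP(1);
    STEP(2);
    if (x > 10) {
        STEP(3);
        x = x + y;
    } else {
        STEP(4);
        if (y > 10) {
            STEP(5);
            y = y + x;
        }
    }
    STEP(6);
    printf("%d %d\n", x, y);
    return path_id;
}
```

x=11, y=0 follows 1236; x=0, y=11 follows 12456; x=0, y=0 follows 1246. Three tests for three paths, matching V(G) = 3 for this program.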
