Let's Make Software Testing Fun

I have been in software testing for quite some time. At times it sucked, because it is usually boring and because testing is sometimes looked down on as a menial job done by less skilled people.

Let's try to overcome both of these problems. Apart from the individual tester's effort, a large and important part has to be played by management.

i. The tester's right attitude: First, realize how important testing is. No matter how much development effort has gone into making a highly desirable product with state-of-the-art technology, if it does not correctly do what it is supposed to do, it is doomed. An improperly tested application reaching customers translates directly into lost business.

The right attitude for a tester is to find satisfaction and joy in hunting down bugs. My uncle Sam, who happens to be a hard-core tester, gave me this inspiring speech: “When a product comes in for testing, you must pounce on it and use every method to break and destroy the system. You must inundate the developer with so many bugs that he curses himself for having developed the product and for not finding those bugs earlier. Destroy the product utterly and completely. Then you can rightfully claim the title ‘Barbaric Tester’. You must find that sadistic joy. Never, ever assume that the product that comes in for testing is bug free. In the vast land of the product code, a treasure of bugs is hidden somewhere and everywhere. Now it's your responsibility as a tester to dig them up, discover them and claim your treasure.”

Hmmmmmmm. Developers, pardon my uncle Sam. My fellow comrades (testers), don’t take my uncle’s advice literally. The point is to add a bit of imagination and some big titles, see testing from a new perspective, and have fun.

ii. Use of automation tools: Manual testing is sometimes boring. But running the same set of test cases again and again, every time a bug is fixed, in the name of ‘regression testing’? Damn, life sucks.

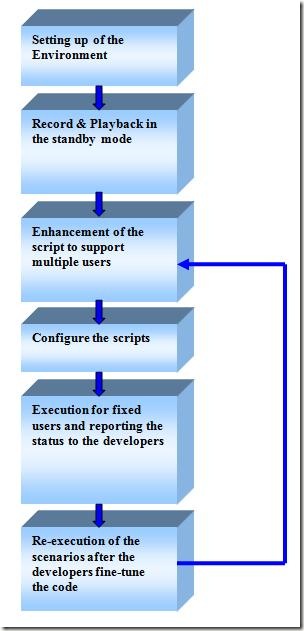

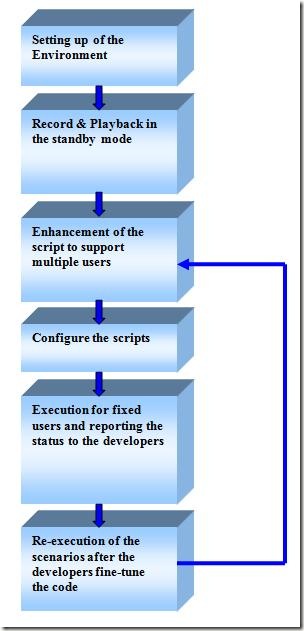

If a test case has to be run several times, automate it using tools like WinRunner or Rational Robot. There are significant advantages to automating such tests. First, you can run them overnight and check the results in the morning, which saves a lot of time and effort. Second, since it sucks to execute the same test manually again and again, there is a higher probability that a half-awake tester will miss a regression bug, whereas the dumb automated test case checks every checkpoint every time you run it.
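To make the "checks every checkpoint every time" point concrete, here is a minimal sketch. It does not use WinRunner or Rational Robot; it swaps in Python's standard `unittest` module as a stand-in. The function `apply_discount` and the helper `run_regression_suite` are made up for illustration and simply play the role of the product under test and the overnight job.

```python
import unittest


def apply_discount(price, percent):
    """Hypothetical function under test; stands in for real product code."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (100 - percent) / 100, 2)


class DiscountRegressionTest(unittest.TestCase):
    """Each assertion is a checkpoint that is verified on every single run."""

    def test_typical_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_zero_discount_leaves_price_unchanged(self):
        self.assertEqual(apply_discount(80.0, 0), 80.0)

    def test_invalid_percent_is_rejected(self):
        with self.assertRaises(ValueError):
            apply_discount(100.0, 150)


def run_regression_suite():
    """Run the whole suite and return (tests_run, failures_plus_errors)."""
    loader = unittest.defaultTestLoader
    suite = loader.loadTestsFromTestCase(DiscountRegressionTest)
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    return result.testsRun, len(result.failures) + len(result.errors)


if __name__ == "__main__":
    tests_run, problems = run_regression_suite()
    print(f"{tests_run} tests run, {problems} problems")
```

A scheduler could call a script like this every night after the build, so the only manual step left in the morning is reading the summary line.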

Try to convince your management to purchase a license for an automation tool. Maybe start with a pilot project using a trial version of the software.

iii. How to make bug hunting fun: Management should create healthy competition between testers. They must find a way to make their testers aggressive and make finding bugs a fun-filled activity. Here is one way that comes to mind:

- A ‘big bug board’ should be hung in a place visible to the entire team. The board should list every tester's name and the number of valid bugs he or she has logged, and it should be updated each week. Every week, testers should be ranked by bug count, with the tester who logged the most bugs ranked number one. Optionally, he or she could be given a big title, say ‘Tester of the Week’.
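If the bug tracker can export who logged each valid bug, the weekly ranking for the board can be computed rather than tallied by hand. The sketch below is only one way to do it: the function name `rank_testers`, the input format (one tester name per valid bug) and the sample names are all assumptions made for illustration, and giving tied testers the same rank is my own choice.

```python
from collections import Counter


def rank_testers(valid_bug_log):
    """Return (rank, tester, bug_count) rows, highest bug count first.

    valid_bug_log is a list of tester names, one entry per valid bug
    logged this week. Testers with equal counts share the same rank.
    """
    counts = Counter(valid_bug_log)
    # Sort by descending count, then by name so the board order is stable.
    ordered = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    board = []
    previous_count = None
    rank = 0
    for position, (tester, count) in enumerate(ordered, start=1):
        if count != previous_count:
            rank = position
            previous_count = count
        board.append((rank, tester, count))
    return board


# Made-up sample data for one week.
week_log = ["Asha", "Ben", "Asha", "Chen", "Ben", "Asha"]
for rank, tester, count in rank_testers(week_log):
    title = "  <- Tester of the Week" if rank == 1 else ""
    print(f"{rank}. {tester}: {count} valid bugs{title}")
```

Only valid bugs should be fed in, as the board is meant to reward real finds and not sheer volume.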

iv. A little change once in a while: If a tester is confined to a single product, or to a single module within a product, for a long time, it will suck. Once in a while, if the tester wants it, he or she should be allowed a change, either by moving to a different product or by taking on a different kind of work, such as a pilot project for a new tool.

v. Equal status: Any organization that understands the importance of customer satisfaction knows the importance of testing the product properly before shipping it to the customer. Management also has to realize that testing needs skill. The goal of any testing project is to test the product as thoroughly as possible while consuming as few resources (time and manpower) as possible. It takes skill and experience to design test cases that are small but exercise large chunks of a product's code, and that are easy to execute but catch a lot of bugs. It takes skill to identify weak areas in order to allocate more resources to them. It takes skill to use automation tools, test management tools, and code coverage and performance testing tools. It takes time for a new entrant to the testing field to become a ‘trained bug catcher’, and experience in this field is valuable.

Management has to take explicit measures to give testers equal status. The following are some of them:

- The testing team should be invited to all important meetings related to a product. Its views must be considered in important decisions, such as when to release the product.

- A separate career path has to be created for testers. They must be made equal to developers in terms of pay and position.

- Management has to allocate adequate funds, as a priority, for the testing team to build adequate infrastructure.

- Training programs have to be organized for new entrants to the testing field, so that they understand testing techniques and learn to use testing tools.
